AI to upend melody making, teach artists how to please, Spotify tech guru says

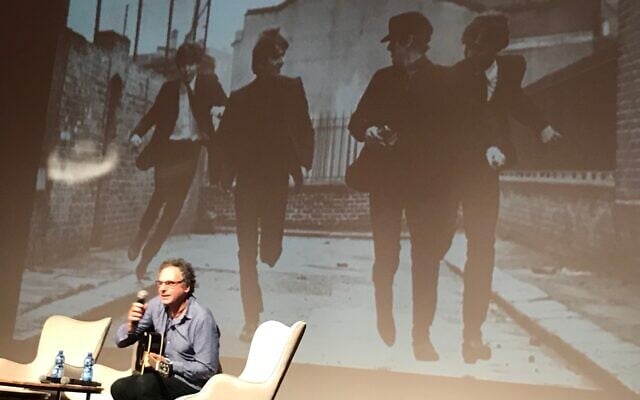

Spotify’s head of tech lab François Pachet shows audience in Tel Aviv how AI tools can make completely new music by mixing elements from top hits

Shoshanna Solomon was The Times of Israel's Startups and Business reporter

Artificial intelligence is set to revolutionize how people create music, but robots will still not replace humans in the art of making melodies, attendees of a conference about art and music were told on Sunday.

“Artificial intelligence will not replace good artists and composers,” François Pachet, a scientist, composer and the director of the Spotify Creator Technology Research Lab, told participants of the TechnoArt 2019 conference in Tel Aviv. “AI will change the way people make art, but it won’t replace them.”

Pachet is considered a pioneer of computer music, and specifically its interaction with AI. At Spotify he leads development of AI-based tools for musicians.

At the conference, Pachet explained how, as director of the Sony Computer Science Laboratory in Paris, where he worked before joining Spotify, he and his team used artificial intelligence software called Flow Machines to make new kinds of music. For example, his team fed the software 45 Beatles songs and produced a new song, “Daddy’s Car,” in the Fab Four’s style. The song is on YouTube and has not been commercialized, he said.

The AI system, Pachet explained, studied the notes and patterns of the Beatles songs and “then used it in a new context to create something new.”

“The song here is new; it is original and it is not doing any plagiarism — but yet it is using a lot of patterns and features of the style of the Beatles,” he said.
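The idea of learning note patterns from existing songs and reusing them in a new context can be illustrated with a toy first-order Markov chain. This is a deliberately simplified stand-in, not the Flow Machines system, which is far more elaborate. The function names (`train_transitions`, `generate`) and the two training phrases are hypothetical and made up for illustration.

```python
import random
from collections import defaultdict


def train_transitions(melodies):
    """Record, for every note, which notes followed it in the training melodies."""
    transitions = defaultdict(list)
    for melody in melodies:
        for current, nxt in zip(melody, melody[1:]):
            transitions[current].append(nxt)
    return transitions


def generate(transitions, start, length, seed=None):
    """Random walk over the learned transitions.

    Every step reuses a note-to-note move seen in training, but the overall
    sequence can be one that never appeared in any training melody.
    """
    rng = random.Random(seed)
    melody = [start]
    while len(melody) < length:
        options = transitions.get(melody[-1])
        if not options:  # dead end: no note ever followed this one in training
            break
        melody.append(rng.choice(options))
    return melody


# Two made-up training phrases (pitch names only, rhythm ignored).
corpus = [
    ["C", "E", "G", "E", "C"],
    ["D", "E", "G", "A", "G", "E", "D"],
]
model = train_transitions(corpus)
print(generate(model, "C", 8, seed=1))
```

The output mixes moves from both phrases, which loosely mirrors Pachet’s description of reusing a style’s patterns in new combinations; a real system would also model rhythm, harmony and longer-range structure.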

Last year, together with French songwriter and producer Benoît Carré, Pachet also used Flow Machines to create “Hello World,” a 15-song album and the first music album composed with artificial intelligence.

The album has been streamed by 12 million users and was well received by critics, with the BBC calling it “the world’s first good robot album.”

Pachet’s work at Spotify has had him mixing genres — such as folk songs with gospel or jazz — to come up with new orchestrations that are very different from the originals. In another twist, his team might blend the rhythm of one song with the harmony of a second to create a “completely brand-new piece” of music, he said.

Critically, however, Pachet said, the decision of what to mix with what is still made by a human artist, not by a machine. “That is the responsibility of the artist,” he said, and that will remain the difference between musical art and robotically generated music.

Outside of composition, artificial intelligence tools are also used to gain insights into how consumers listen to music, Pachet said. In a study published in March, Pachet and two other researchers examine the phenomenon of “music skipping.”

Online streaming services have become a preferred way to consume music. But because so many songs are available on these services, listeners tend to “skip” quickly from one song to the next. This has allowed the researchers to create a “skip profile” for each song, which shows how long people actually listen to it before skipping to the next track.

“Skipping is a crucial feature in understanding modern listening behaviors,” the researchers say in the paper. “For the first time in the history of musicology, researchers can systematically collect and analyze massive amounts of data about music listening behavior.”

Research data presented in the study shows that a quarter of all streamed songs are skipped within the first five seconds, 29% within the first 10 seconds and 35% within the first 30 seconds, while only some 48% of all songs are listened to in their entirety, Pachet said.
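The article does not spell out exactly how the researchers computed a skip profile. One plausible reading, sketched here under that assumption, is the cumulative share of a song’s plays that were abandoned by each point in time, alongside the share of plays that ran to the end. The function names and the eight listening durations below are hypothetical, made up for illustration and not taken from the study.

```python
def skip_profile(listen_seconds, song_length, checkpoints):
    """Share of plays skipped at or before each checkpoint (in seconds).

    Assumption: a play counts as skipped if it stopped before the song ended.
    """
    plays = len(listen_seconds)
    profile = {}
    for t in checkpoints:
        skipped = sum(1 for s in listen_seconds if s < song_length and s <= t)
        profile[t] = skipped / plays
    return profile


def completion_rate(listen_seconds, song_length):
    """Share of plays that reached the end of the song."""
    return sum(1 for s in listen_seconds if s >= song_length) / len(listen_seconds)


# Hypothetical data: seconds listened in eight plays of a 200-second song.
plays = [3, 4, 8, 25, 90, 200, 200, 200]
print(skip_profile(plays, 200, [5, 10, 30]))  # {5: 0.25, 10: 0.375, 30: 0.5}
print(completion_rate(plays, 200))            # 0.375
```

Under this reading, the stable “signature” Pachet describes would mean that profiles computed from different days’ or weeks’ plays of the same song come out nearly identical.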

The data also showed that the skip profile of each song is the same every day, every week and every month, for everyone included in the study.

“This means that skipping is not people centered, but it is because of the song, it is the signature of the song,” Pachet said.

The research also showed a correlation between skipping and the structure of a piece of music. With this information, he said, musicians will be able to study how people react to their music and develop compositions that are better received.

Music makers would be able to “manipulate their audience in a very precise way,” Pachet said. And “that is going to change a lot of things in future,” he said.

In addition, Pachet said, as the use of AI tools in music advances, regulators may need to redefine what counts as “original” content, and perhaps create new copyright laws and a new royalties system for music that AI reinvents by remixing and reworking existing originals.
