Bias claims spur debate on need for curbs, oversight of facial recognition tech

Official at Israeli startup AnyVision says he hopes for regulation of the industry and asserts that his firm’s software could never be abused, unlike open-source platforms

A global backlash against facial recognition technologies due to the bias found to be intrinsic in some of the tools has prompted one of Israel’s leading startups in the field to warn against a wholesale rejection of the field.

Adam Devine, chief marketing officer at Israel’s controversial AnyVision, a global leader in visual intelligence technology, said governments should allow only specialized providers, those who know how to protect privacy and prevent bias, to sell facial recognition software. Nor should such tools be sold to organizations that are not going to use them correctly, he said.

But “don’t throw the baby out with the bathwater,” he urged.

AnyVision was recently accused by media of providing the Israel Defense Forces with tools for mass surveillance in the Palestinian territories. Microsoft in March divested its holdings in AnyVision, even though the tech giant said it couldn’t substantiate claims that the startup’s technology was used unethically after a team of lawyers it hired, headed by former US attorney general Eric Holder, looked into the matter.

Facial recognition technology as a whole has come under fire from civil liberties activists who say the tools are biased against people of color and infringe upon citizens’ privacy. The technology, which uses visual images to help computers identify people, is in wide use, from unlocking phones to picking out a suspect’s face at borders or mass gatherings. Since increased use of the technology could help keep crime and terror in check, a global debate is now raging over its pros and cons.

On June 25, Boston became the second-largest city in the world, after San Francisco, to ban the use of facial recognition technology by police and city agencies, based on the idea that the technology is inaccurate when it comes to people of color.

Unable to tell Black people apart

Robert Williams, a Black man living in a suburb of Detroit, was arrested earlier this year at his home, in front of his wife and two young daughters, and locked up for nearly 30 hours. Facial recognition technology used by Michigan State Police had mistaken him for another Black man, because the tech used by the force is unable to tell Black people apart.

The only thing Williams had in common with the suspect caught by the surveillance camera of a watch shop was that they are both large-framed Black men, said the American Civil Liberties Union (ACLU). The organization last month called for lawmakers “to stop law enforcement use of face recognition technology.”

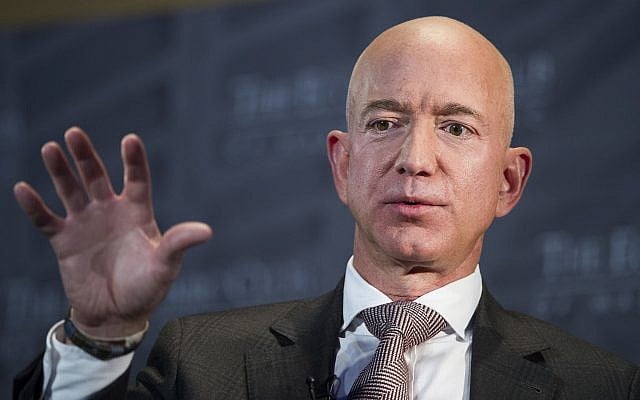

Williams’s story comes as tech firms in the US, including IBM, Amazon and Microsoft, were planning to pause or end sales of their facial recognition technology to police in the US. Amazon on June 10 announced a one-year pause on allowing law enforcement to use its Rekognition platform, two days after IBM said it would no longer offer its general-purpose facial recognition or analysis software, out of potential human rights, bias and privacy concerns.

“We’ve advocated that governments should put in place stronger regulations to govern the ethical use of facial recognition technology, and in recent days, Congress appears ready to take on this challenge,” Amazon said in a statement. “We hope this one-year moratorium might give Congress enough time to implement appropriate rules, and we stand ready to help if requested.”

The ACLU said the stand taken by these firms comes years after activists started to sound warnings about the technology.

“For years, racial justice and civil rights advocates had been warning that this technology in law enforcement hands would be the end of privacy as we know it,” ACLU director Kade Crockford wrote in a blog post on June 16, after the tech firms made their announcements. “It would supercharge police abuses, and it would be used to harm and target Black and brown communities in particular.”

“But the companies ignored these warnings and refused to get out of this surveillance business. It wasn’t until there was a national reckoning over anti-Black police violence and systemic racism, and these companies getting caught in activists’ crosshairs for their role in perpetuating racism, that the tech giants conceded — even if only a little,” Crockford wrote.

A 2018 MIT study that looked into three commercially released facial-analysis software products of major tech firms showed both a skin-type and a gender bias. The software had an error rate of 0.8% in identifying the gender of light-skinned men, as opposed to more than 34% for darker-skinned women, for example.

AnyVision, a Holon, Israel-based startup founded in 2015 by Neil Robertson and Eylon Etshtein, uses artificial intelligence technology to recognize faces, bodies and objects for security, medical and business purposes, among others.

Its recognition technology software is used to “authenticate legitimate users and customers; detect targets; track criminals and terrorists; and locate missing persons in a wide range of settings, including banks, educational institutions, correctional facilities, airports, casinos, businesses, and special events,” according to the database of Start-Up Nation Central, which tracks the Israeli tech industry.

The firm has raised a total of $74 million from investors, including Microsoft’s venture capital arm; Qualcomm Ventures; LightSpeed Venture Partners; Robert Bosch GmbH; and Eldridge Industries, according to Start-Up Nation Central.

Last year Israeli business newspaper The Marker reported that the IDF uses technology provided by AnyVision at West Bank checkpoints, and in cameras dotting the Palestinian Authority. The cameras and database were being used to identify and track potential Palestinian assailants, the report said. Then, in October, an MSNBC report queried why Microsoft was funding an Israeli firm that helps surveil West Bank Palestinians.

The report led Microsoft to probe the matter, and in March this year the US tech giant said it was pulling out of its investment in AnyVision, even though its own investigation couldn’t substantiate claims that the startup’s technology was being used unethically.

“After careful consideration, Microsoft and AnyVision have agreed that it is in the best interest of both enterprises for Microsoft to divest its shareholding in AnyVision,” said Microsoft in a statement.

Besides divesting from the firm, Microsoft also said it will stop making minority investments in companies that sell facial-recognition technology.

Microsoft also said the audit underscored the challenges of being a minority investor in a company selling sensitive technology, because it may not have enough oversight or control over how the technology is used.

Now, headlines in the US and globally have further raised the heat against these technologies.

AnyVision’s Devine declined to comment on the Microsoft and AnyVision relationship beyond what the US tech giant said at the time of divestment. But he added that no other tech firm has pulled out from its investment in the Israeli startup and that “sales have not been impacted by the Microsoft divestiture.”

Interest in the company’s products, which have netted sales worth “many millions” of dollars around the world, is up, he said, thanks to a “desire to improve the safety of people and premises.”

Customers use AnyVision’s facial recognition technologies to open doors, for remote authentication and identification for a secure transaction and for security purposes, “to identify known people on watch lists to prevent threats.”

Shortly after the outbreak of the coronavirus, AnyVision installed thermal cameras at Sheba Medical Center in Ramat Gan, Israel’s largest hospital, to let officials spot hospital staff with a fever.

The facial recognition software can reportedly identify “in seconds” anyone who came into contact with an infected staffer, and allows officials to determine precisely who should go into isolation.

The backlash against facial recognition companies “is not affecting us in any material way right now, other than businesses and individuals are looking at our website,” Devine said. “We are probably getting more inbound interest as people really want to understand what facial recognition is actually about.”

Forcing the conversation

Devine said the recent headlines about facial recognition technologies are forcing a long-overdue conversation and, he hoped, legislation.

“It is good that this is happening… it will force organizations like the ACLU to really understand what good face recognition is and be able to differentiate it from bad facial recognition.” In fact, it will force “every player in the market to get educated,” Devine said.

He added that a high-quality facial recognition product, like those of AnyVision or some of its peers, is created with built-in privacy controls and “care around bias at its core,” with algorithms trained to “equally and accurately recognize every face, every gender and every orientation, without bias.”

“It is purely about education, like a child,” Devine said. “An algorithm is a blank slate. No person is born with bias; that bias is learned. In the same way, no algorithm is born with bias, it has to be trained in or trained out of the algorithm.”

In other words, an algorithm’s developers need to supply it with a wide enough range of training data.

The MIT study was looking at open-source algorithms that were “poorly trained,” he said, and that reflected poorly on the whole industry. Following the study, the public assumed that facial recognition was intrinsically biased. But that is an “uneducated, flawed statement,” he maintained.

The widespread concern today is that law enforcement officers are able to upload to open source platforms images of protesters and identify them so that they could be arrested.

There is a big difference between open source facial recognition technologies that are free for anyone to use, like those offered by some big tech firms, and those of specialized businesses like AnyVision, Devine said.

“There is no way our software or other software like ours could ever be used for that purpose,” Devine said. “It is technically not possible, based on the way we sell it and based on privacy controls.”

AnyVision’s software, for example, identifies only specific people that are already on a criminal or wanted watchlist, he said, and it has controls that prevent users from adding faces to watchlists without permission.

In addition, the software has automated bystander blurring, which ensures only the faces of people on watchlists are seen clearly.

Using AnyVision software, users cannot “reverse engineer” the identification of a person, by uploading a picture to find out who the person is, Devine said. And the software is engineered to “eliminate bias” with a core algorithm that was trained on the most diverse datasets in the most diverse, real-world environments, he said.

“At the moment, we have no police customers in the US. I wish we did,” said Devine. “I would like for every police organization in the entire world to be using the kind of facial recognition that we produce.”

Devine doesn’t worry that facial recognition technology will be banned completely. “Too much good has come for the safety of the world from safety recognition for it to be banned,” he said. “There may be a case where it is banned for certain cases, and I think that is fine.”

There should, however, be legislation in place requiring firms developing the algorithms to make sure they are accurate and bias-free, and setting out the specific cases in which the technology can be used.

Regulatory vacuum

“There is a regulatory vacuum” regarding facial recognition technologies, said Tehilla Shwartz Altshuler, a senior fellow at the Israel Democracy Institute who studies the impact of technology on democracy.

“There are no rules, not even ethical ones for now,” she said, and the field is not regulated in any democratic country. “This vacuum needs to be solved. The harm connected to facial recognition is wider and deeper than we thought at the beginning.”

But banning facial recognition will only give countries like China an edge, she said. Western countries would be putting “our head in the sand, if we don’t develop them.”

The right thing to do at the moment, she said, is to “take an ethical pause,” as suggested by Amazon, to figure out how to solve the problems and set out criteria for going forward.

Strict regulations should limit what the technology can be used to look for, and it should never be allowed to infer additional private information about a person, such as a disease or a sexual leaning, Shwartz Altshuler said.

The algorithms also need to be “transparent,” Shwartz Altshuler added, with developers being open about what data they were trained on. This information may be difficult for the layman to understand, but professionals would be able to vet it. And there should always be the ability to have a human supervise the software’s conclusions, on a case-by-case basis, she said.
