When Picasso drew Lady Gaga

A collaboration between researchers from Disney and an Israeli university helps create ‘real’ computer-generated sketches

Israeli researchers are working with the technology arm of Disney, the entertainment conglomerate, to develop software that learns artists’ drawing style and how they select features to highlight as they interpret a face into a portrait.

The technology, being developed by Disney Research and staff from the Interdisciplinary Center in Herzliya, is not only interesting from an artistic point of view, but can also help in developing artificial drawing tools, the team said.

“There’s something about an artist’s interpretation of a subject that people find compelling,” said Moshe Mahler, a digital artist at Disney Research, Pittsburgh. “We’re trying to capture that — to create a computer model of it — in a way that no one has done before.”

Mahler, together with Itamar Berger and Ariel Shamir of the Interdisciplinary Center, presented the technology at the recent ACM SIGGRAPH 2013, a huge computer graphics show, held this year in California. Jessica Hodgins, Vice President of Disney Research, and Elizabeth Carter, a Disney Research, Pittsburgh associate, were also part of the research team.

The system being developed by the team is different from other programs that can create line drawings from photos. Such programs offer a standard, “off-the-shelf,” depersonalized graphics result, akin to a trace — ignoring the artistic rendering and “special sauce” that a good sketch artist can add to a drawing.

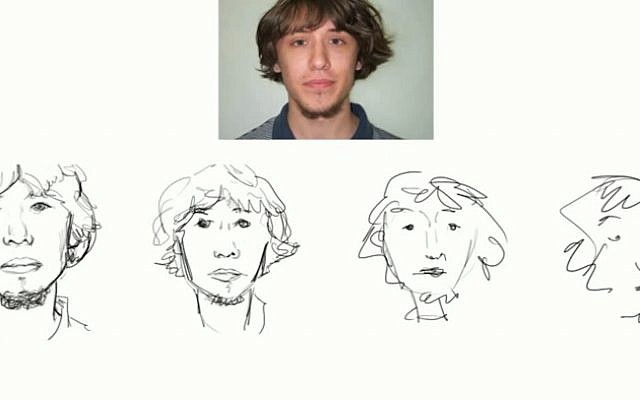

To capture that “sauce,” the team developed a database representing the abstractions of a set of artists. To create the database, the researchers recruited seven artists. Each sketched portraits based on 24 photographs of male and female faces, using a stylus pen that enabled the researchers to record each stroke. The artists created four sketches of each photo, with a decreasing time limit for each: 270, 90, 30 and 15 seconds. The system also took into account stylus pressure, stroke speed, length and curvature, and other unique “tics” exhibited by the artists.

The result was a dataset of 672 sketches at four abstraction levels, with about 8,000 strokes for each artist. Each stroke was categorized as a shading stroke or contour stroke (the strokes that precisely follow the curves and planes of an object), with the contour strokes subdivided into complex and simple strokes.
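For readers who want to check the arithmetic, the short Python sketch below reconstructs the dataset size from the figures reported above. The class and variable names are hypothetical, chosen only for illustration; they are not taken from the researchers' software, and the strokes-per-sketch figure is only a rough average derived from the “about 8,000” estimate.

```python
from dataclasses import dataclass
from enum import Enum
from itertools import product


class StrokeKind(Enum):
    """Stroke categories described in the article (names are illustrative)."""
    SHADING = "shading"
    SIMPLE_CONTOUR = "simple contour"
    COMPLEX_CONTOUR = "complex contour"


@dataclass(frozen=True)
class Sketch:
    """One sketch: which artist drew which photo under which time limit."""
    artist: int
    photo: int
    time_limit_s: int


# Figures reported in the article.
ARTISTS = range(7)
PHOTOS = range(24)
TIME_LIMITS_S = (270, 90, 30, 15)  # one abstraction level per time limit

# Every artist sketches every photo once per time limit.
sketches = [Sketch(a, p, t) for a, p, t in product(ARTISTS, PHOTOS, TIME_LIMITS_S)]

total_sketches = len(sketches)                       # 7 * 24 * 4
sketches_per_artist = total_sketches // len(ARTISTS)  # 24 * 4

# Rough average only: the article says "about 8,000 strokes" per artist.
approx_strokes_per_sketch = 8000 / sketches_per_artist

print(total_sketches, sketches_per_artist, round(approx_strokes_per_sketch))
```

Seven artists, 24 photos and four time limits give 7 × 24 × 4 = 672 sketches, or 96 per artist, which matches the reported total.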

“Watching these strokes accumulate was an interesting part of this project,” Berger said. Some artists started with the eyes, others with the outlines of the face. One of the artists had no discernible pattern, starting “wherever.”

The system also evaluated the artist’s geometric interpretation of the face and how it is conveyed in the drawing. For instance, one artist consistently spaced eyes closer together in the sketches than they appear in reality, while another tended to draw wide jaws. The result, the team said, was that in a perceptual test in which participants were asked to match photos to “real” sketches, the participants were able to attribute a synthesized sketch to the artist on whose style it was based. The results demonstrated that the sketch generation method produced multiple, distinct styles that are similar to hand-drawn sketches, the team said.

What the program can’t do, Mahler said, is replicate the spontaneity of an artist and their ability to balance the drawing as they work. “Our approach only understands the trends of how an artist might work,” he added. And, because all of the artists based their work on photos, even the hand-drawn sketches were more constrained and reflected less personality than would have been the case if based on living, breathing subjects.

Still, the system could soon be used to develop software that creates better-looking cartoons and comics, to teach artists to correct bad habits by analyzing their style, or even to “bring back” talented artists of the past, based on duplications of an artist’s style by modern students.

Ever wonder what Picasso would have done with someone like Lady Gaga? Thanks to the Disney team, we may yet find out.

The Times of Israel Community.